AI and the Art of Looking for the Running Bird in Your UX Research

AI can help your UX research look flawless. But what if, behind that polished surface, the insights that actually matter are quietly disappearing? Here’s my most unexpected lesson from a zoo.

Why does the ostrich have wings if it cannot fly?

Last year, my niece, a crazy cat fan, insisted on going to the Saigon Zoo to see Mika the cat, but we ended up seeing a massive ostrich instead. Confused, she asked:

“Why doesn’t it fly? Is the running bird the wrong bird?”

We know that the ostrich is not a failure. But her question stayed with me long after we left the zoo.

How often do we, as adults, make the same mistake on a larger scale, assuming things are wrong just because they don’t fit our ideal models?

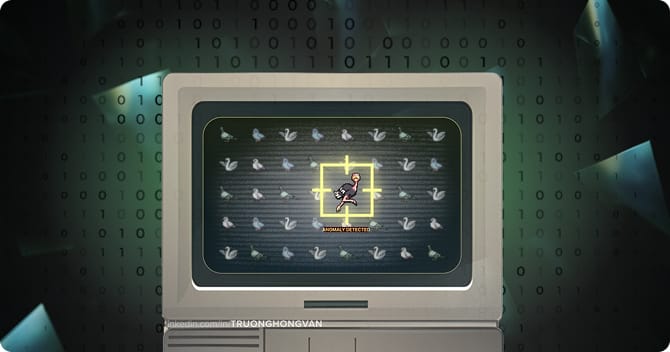

As a UX practitioner watching this industry change fast, I can’t help but wonder: are we getting too caught up in AI just for the sake of it? Especially in the Discovery stage, can these tools actually help us understand users better, or are they just “fixing” the ostriches because they don’t look like the birds we expect?

Then one day, I looked at my user interview report.

Too clean to be true

Looming deadlines are exhausting, and we all know it. We’re under pressure to push screen after screen, feature after feature, yet stakeholders still expect tidy, well-reasoned results. That’s where AI feels like a lifesaver.

Or so I thought, until AI flattened all the messiness of real human conversation (the hesitant answers, the complex nuance) into extremely clean bullet points. Something felt off!

Before the AI era, I’d sit for hours with recordings, digging through raw data and ‘boring’ columns. During that slow, repetitive process, I could feel the product’s energy, its rhythm, the pulse of real people behind the words.

Now, when I hand synthesis over to AI, I get reports that look almost too good. What they lack are the cracks that let new light in.

The cost of perfection

Perfection has no cracks. And without cracks, there’s no room for anything new to grow. The moment we let AI reshape the ostrich into the bird we expected, we lose three critical things.

First, we lose the depth of context

Body language and social norms are pillars of human conversation. Multimodal AI systems can spot obvious emotions fairly well, but detection is not understanding.

A machine might record a three-second silence, but it does not feel how awkward the room is. Is it anger? Confusion? Someone gathering their thoughts? The AI has no idea. Lacking that intuition, it defaults to the literal meaning, because that is the statistically safe guess.

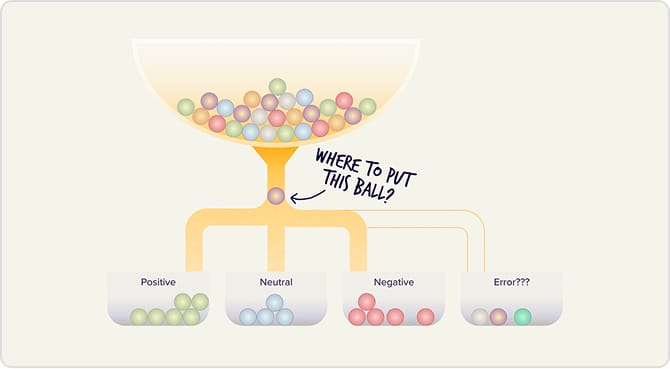

Second, we lose the spectrum of emotion

Human emotions are surprisingly rich: feelings mix together like paint to create dozens of different colors. AI tools often struggle with this range and squeeze it into fixed boxes.

For example, when a user says “I love it” while abandoning the signup flow, the abandonment is the real signal. But an AI that reads only the transcript misses this behavioral contradiction. We strip away the resolution of human emotion and reduce a complicated mental state to a simple pattern.

Last but not least, our executive function starts to weaken

Just as relying on digital maps dulls your sense of direction and stops you from memorizing routes, automating everything erodes our mental map. You might travel between Point A and Point B daily, yet never realize a Point C exists in between.

We often treat manual analysis as work to automate, but there is value in the struggle. The practice that strengthens your skills, the hard tasks that sharpen your mind, the process of extracting insights: these are things you need to get your hands dirty with.

How I hunt for the running birds

The solution isn’t to reject AI, but to use it intentionally, knowing when to let it lead and when to take the reins yourself.

Distinguish knowledge types to choose your approach

To work effectively with AI, you need to understand its boundaries. Knowledge management theory commonly divides knowledge into three tiers:

- Explicit knowledge: Written, structured facts, such as design guidelines, data logs, and transcripts. AI excels in this domain.

- Implicit knowledge: Practical know-how. The veteran designer’s internal library of shortcuts and unwritten workflows, built through years of repetition. This is the competitive edge of seniority.

- Tacit knowledge: The domain of heart and gut. The intuition that catches a user’s hesitation or the hidden friction in a polite refusal. Deeply personal and impossible to transfer via a manual. Gurus live here.

Hand the explicit facts to AI. Implicit and tacit knowledge are yours to own. After all, nature never followed a strict rule that “birds must fly”, and that’s exactly why the ostrich exists.

Let AI process the typical so you can seek the weirdo

- Step 1: Ask your AI tool to map out the typical user journey based on best practices.

- Step 2: During research sessions, stop trying to validate this normal path. Instead, strictly document behaviors that deviate from it.

- Step 3: Keep your raw notes away from the AI synthesizer. If you feed your observations back into the machine, your precious outliers will be statistically averaged into oblivion. Keep them raw.

- Step 4: Compare the AI’s expected outcome with your unusual observations. The tension between a mathematically completed task and a user’s furrowed brow is exactly where your design opportunity lives.

Responsibly fall in love with your mistakes

I still find profound meaning in the old ways: transcribing interviews by hand, tagging quotes one by one.

And somewhere in that traditional process, I also catch myself asking: “Did I lead them, or did they get there on their own?” That kind of reflection is hard when AI hands you a tidy interpretation. But it’s how you grow into a strong Senior UX/Product Designer.

In nature, diversity is the norm, and innovation hides in what doesn’t fit. So let AI find the rule while you build what breaks it, and keep creating your own ostrich.

Footnote: An ostrich cannot fly

The scientists at the Max Planck Institute for Intelligent Systems didn’t see a broken bird. They saw a question worth asking. By studying the ostrich’s leg, they developed BirdBot, an energy-efficient robot leg that needs fewer motors than comparable legged machines.

If they had treated the ostrich as an outlier to be ignored, they would have missed the blueprint for the future of robotics.

Don’t let AI average your “ostriches” out of existence.

References

1. PetHelpful (2022). Unbothered Orange Cat Lives with Bear BFF in Vietnam Zoo

2. Mostert, W., Kurien, A., & Djouani, K. (2025). Multi-Modal Emotion Detection and Tracking System Using AI Techniques

3. Finet, A., Kristoforidis, K., & Laznicka, J. (2025). The Limits of AI in Understanding Emotions

4. Wikipedia (2023). Tacit Knowledge

5. Polanyi, M. (1966). The Tacit Dimension

6. Nonaka, I., & Takeuchi, H. (1995). The Knowledge-Creating Company: How Japanese Companies Create the Dynamics of Innovation (SECI model)

7. Max Planck Institute for Intelligent Systems (2022). BirdBot is energy-efficient thanks to nature as a model

8. Scientific American (2022). Birds Make Better Bipedal Bots Than Humans Do

9. Max Planck Institute for Intelligent Systems (2022). Meet BirdBot, an energy-efficient robot leg - research published in Science Robotics